Written by Thomas Graf, Paolo Lucente, and Benoit Claise.

Back from IETF 125 in Shenzhen, we wanted to take a moment to answer a recurring question in the world of YANG-based telemetry: IETF YANG-Push or gRPC/gNMI? I attempted to answer this question about six years ago in the blog post “Model-driven Telemetry: IETF YANG Push and/or Openconfig Streaming Telemetry?”. The IETF specifications (RFC 8639 to RFC 8641) cover configured or dynamic subscriptions over transports such as NETCONF or RESTCONF. My conclusion at the time was: “In the end, the market will decide!”.

It’s time to revisit this “YANG-Push versus gRPC/gNMI” question, now that the three of us (Thomas, Paolo, and I, writing this blog) and many others have been pushing the industry toward data model-driven telemetry at the IETF. Asked differently: “Since you are pushing IETF YANG-Push, what are its advantages compared to gRPC/gNMI?”.

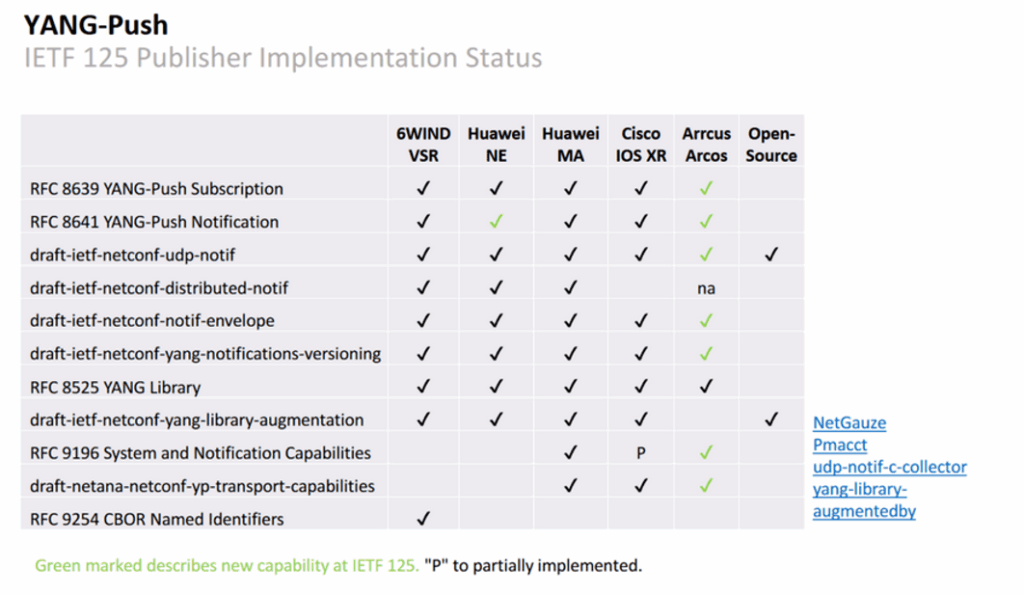

If you look at IETF YANG-Push today, it is quite different from the YANG-Push of six years ago, as demonstrated by the results of the IETF 125 hackathon last week in Shenzhen.

The biggest IETF YANG-Push innovation, in my mind, is streaming over UDP, mimicking the successful IPFIX protocol, where huge numbers of flow records are sent to an anycast IP address, a single IP address for all the IPFIX collectors. And IPFIX scales! Exactly as IPFIX has done for years, YANG-Push now streams directly from the line cards/NPUs (with draft-ietf-netconf-distributed-notif), instead of initiating and terminating sessions on the Route Processor (like NETCONF, RESTCONF, gRPC, and gNMI).

On top of that, we augmented IETF YANG-Push with a precise observation timestamp, metadata such as sender ID and sequence number, and support for the YANG module revision. All of this facilitates the integration of telemetry data with other sources of information: IPFIX, BMP, topology (Service & Infrastructure Maps, SIMAP), service assurance (SAIN), configuration, etc. Think big: think about all the building blocks required to close the loop. And finally, there is CBOR binary encoding, for very compact messages and reduced export bandwidth requirements.
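Because UDP gives no delivery guarantee, the sequence number metadata is what lets a collector notice lost messages, much like with IPFIX. Here is a minimal Python sketch of how a collector might track gaps per sender. It assumes each line card or NPU exports with its own sender ID and that each decoded message exposes `sender_id` and `sequence_number` (hypothetical field names, not the wire names of any draft), and it ignores sequence-number wraparound.

```python
from dataclasses import dataclass, field


@dataclass
class SequenceTracker:
    """Tracks the highest sequence number seen per sender to spot UDP loss.

    Hypothetical sketch: sender_id and sequence_number are illustrative
    names, and counter wraparound is not handled.
    """

    last_seen: dict[str, int] = field(default_factory=dict)
    lost: dict[str, int] = field(default_factory=dict)

    def observe(self, sender_id: str, sequence_number: int) -> int:
        """Record one message and return how many messages were skipped before it."""
        previous = self.last_seen.get(sender_id)
        gap = 0
        if previous is not None and sequence_number > previous + 1:
            gap = sequence_number - previous - 1
            self.lost[sender_id] = self.lost.get(sender_id, 0) + gap
        # Duplicates and late (reordered) messages do not move the high-water mark.
        if previous is None or sequence_number > previous:
            self.last_seen[sender_id] = sequence_number
        return gap


if __name__ == "__main__":
    tracker = SequenceTracker()
    for seq in (1, 2, 5, 6):
        tracker.observe("linecard-0", seq)
    print(tracker.lost)  # {'linecard-0': 2}
```

Keying the state by sender matters once publishing is distributed: each line card has its own independent sequence, so a single global counter would report false gaps.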

Back to the basics of telemetry for a second! Network operators want to subscribe to YANG-modelled metrics because:

- Particular data is not supported in SNMP.

- It allows sub-5-minute granularity at the same cost, thanks to its push-based approach.

- Configuration is done with YANG models, so operational metrics must use the same modeling language (see Why Data Model-driven Telemetry is the only useful Telemetry?)

Now that we have established why a network operator moves from SNMP to YANG, let’s understand the differences between gRPC/gNMI/vendor-specific implementations and IETF YANG-Push.

- YANG-Push (RFCs 8639–8641) is the IETF’s answer to push-based telemetry. It extends NETCONF and RESTCONF — existing network management protocols — by adding a subscription mechanism so devices can stream data to collectors rather than waiting to be polled. It’s domain-specific by design: the data model is always YANG, and the data is network state (interfaces, routing tables, QoS counters, etc.).
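To make the subscription mechanism concrete, here is a minimal Python sketch that builds the input of the RFC 8639 `establish-subscription` RPC, as augmented by RFC 8641, in the RFC 7951 JSON encoding used by RESTCONF. It assumes a periodic subscription to the operational datastore with an XPath filter; the filter and the 5-second period are illustrative, and how the body is sent (for example as a POST to a RESTCONF `/restconf/operations/ietf-subscribed-notifications:establish-subscription` resource) depends on the device and transport.

```python
import json


def build_periodic_subscription(xpath: str, period_cs: int) -> dict:
    """Return an establish-subscription input body in RFC 7951 JSON form.

    Node names follow RFC 8639 (ietf-subscribed-notifications) as augmented
    by RFC 8641 (ietf-yang-push); the period is expressed in centiseconds.
    """
    if period_cs <= 0:
        raise ValueError("period must be a positive number of centiseconds")
    return {
        "ietf-subscribed-notifications:input": {
            # Subscribe to the operational datastore (identity from RFC 8342).
            "ietf-yang-push:datastore": "ietf-datastores:operational",
            # Select the subtree of interest with an XPath filter.
            "ietf-yang-push:datastore-xpath-filter": xpath,
            # Periodic update trigger; the child node inherits the module namespace.
            "ietf-yang-push:periodic": {"period": period_cs},
        }
    }


if __name__ == "__main__":
    # 500 centiseconds = 5 seconds, an illustrative value.
    body = build_periodic_subscription("/ietf-interfaces:interfaces", 500)
    print(json.dumps(body, indent=2))
```

Switching to on-change updates would replace the `periodic` container with the `on-change` case of the same RFC 8641 choice; the rest of the body stays the same.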

- gRPC is Google’s general-purpose RPC framework, language- and domain-agnostic. For networking specifically, the relevant standard is gNMI (gRPC Network Management Interface), an OpenConfig specification that uses gRPC as its transport. The combination of gRPC + gNMI is the practical competitor to YANG-Push.

Even though we use gRPC/gNMI as a single term, it is actually multiple versions and multiple vendor implementations — and that distinction matters operationally. Three problems compound each other:

- Fragmentation. Before, with SNMP, one data processing chain per vendor was enough. Now, with gRPC/gNMI/vendor-specific implementations, the same vendor requires multiple chains: one per OS, sometimes one per OS version. Cisco alone has different proto files for IOS-XR, IOS-XE, and NX-OS. Each vendor encodes metadata differently, which limits what can be automated.

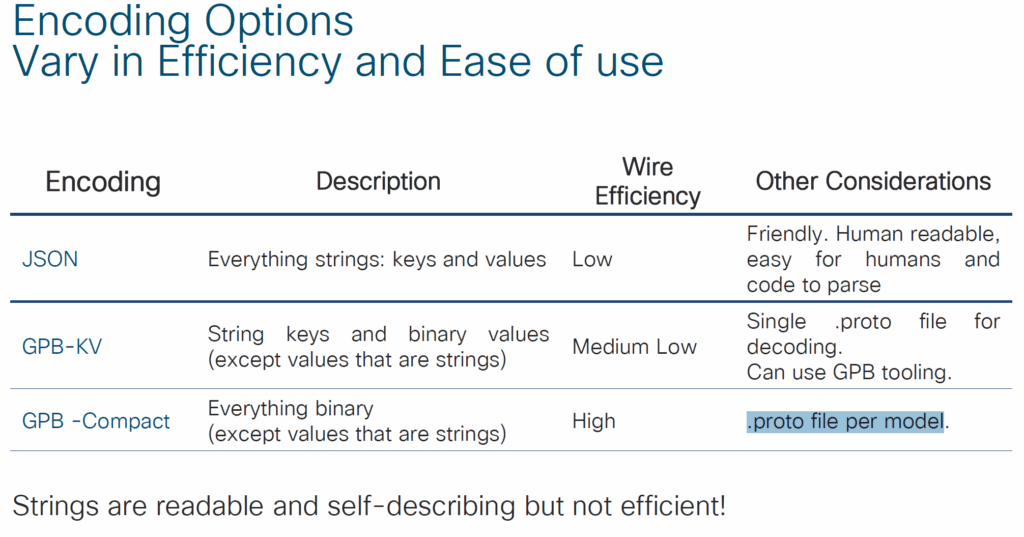

- No self-describing schema. With SNMP, MIB modules described the schema of the metric directly, enabling automated onboarding and maintenance of the data processing chain. With gRPC/gNMI/vendor-specific implementations, we lost that. A collector receiving a GPB-encoded stream has no built-in way to discover what the data means without an out-of-band schema. In GPB-compact mode specifically, you need one compiled .proto file per YANG module, and there are thousands of YANG modules.

- YANG complexity. YANG is a richer language than SMI/MIB: it allows nested data structures, augmentations, and deviations. Not all components in the data processing chain, such as stream processors and time series databases, can digest that nesting natively without transformation work.
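The nesting problem shows up as soon as a notification has to land in a flat time series table. The following Python sketch flattens YANG-JSON-like data into path/value pairs. It is deliberately naive, to show what is lost without the schema: list entries are indexed by position because the collector does not know which leaf is the list key, and the counter stays a string because RFC 7951 encodes 64-bit integers as JSON strings. The sample payload is hand-written for illustration.

```python
from collections.abc import Iterator
from typing import Any


def flatten(node: Any, path: str = "") -> Iterator[tuple[str, Any]]:
    """Yield (path, leaf value) pairs from nested YANG-JSON-like data."""
    if isinstance(node, dict):
        # Container or list entry: descend into each child node.
        for name, child in node.items():
            yield from flatten(child, f"{path}/{name}")
    elif isinstance(node, list):
        # YANG list: positional index, because the key leaf is unknown without the schema.
        for index, entry in enumerate(node):
            yield from flatten(entry, f"{path}[{index}]")
    else:
        # Leaf value: emitted as-is, with no type information attached.
        yield path, node


if __name__ == "__main__":
    # Hand-written sample in the style of ietf-interfaces operational data.
    sample = {
        "ietf-interfaces:interfaces": {
            "interface": [
                {"name": "eth0", "statistics": {"in-octets": "1024"}},
            ]
        }
    }
    for row in flatten(sample):
        print(row)
```

This prints `('/ietf-interfaces:interfaces/interface[0]/name', 'eth0')` and `('/ietf-interfaces:interfaces/interface[0]/statistics/in-octets', '1024')`: usable, but without the schema nothing says that `name` is the key or that `'1024'` is a 64-bit counter.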

In other words, SNMP is cheap and established. YANG is expensive to operate and new.

The draft “An Architecture for YANG-Push to Message Broker Integration” addresses a large part of the problem, as mentioned in its introduction:

Nowadays network operators are using YANG [RFC7950] to model their configurations and obtain YANG modelled data from their networks. It is well understood that plain text are initially intended for humans and need effort to make it machine readable due to the lack of semantics. YANG modeled data is addressing most of these needs.

Increasingly more network operators organizing their data in a Data Mesh [Deh22] where a Message Broker such as Apache Kafka [Kaf11] or Apache Pulsar [Pul16] facilitates the exchange of messages among data processing components like a stream processor to filter, enrich, correlate or aggregate, or a time series database to store data.

Even though YANG is intended to ease the handling of data, this promise has not yet been fulfilled for Network Telemetry [RFC9232]. From subscribing on a YANG datastore, publishing a YANG modeled notifications message from the network and viewing the data in a time series database, manual labor, such as obtaining the YANG schema from the network and creating a transformation or ingestion specification into a time series database, is needed to make a Message Broker and its data processing components with YANG notifications interoperable. Since YANG modules can change over time, for example when a router is being upgraded to a newer software release, this process needs to be adjusted continuously, leading often to errors in the data chain if dependencies are not properly tracked and schema changes adjusted simultaneously.

There are four main objectives for native YANG-Push notifications and YANG Schema integration into a Message Broker, as described in the Motivation section:

3.1. Automatic Onboarding

Automate the Data Mesh onboarding of newly subscribed YANG metrics.

3.2. Preserve Schema

The preservation of the YANG schema, that includes the YANG data types as defined in [RFC6991] and the nested structure of the YANG module, the data taxonomy, throughout the data processing chain ensures that metrics can be processed and visualized as they were originally intended. Not only for users but also for automated closed loop operation actions.

3.3. Preserve Semantic Information

[RFC7950] defines in Section 7.21.3 and 7.21.4 the description and reference statement. This information is intended for the user, describing in a human-readable fashion the meaning of a definition. In Data Mesh, this information can be imported from the YANG Schema Registry into a Stream Catalog where subjects within Message Broker are identifiable and searchable. An example of a Stream Catalog is Apache Atlas [Atl15]. It can also be applied for time series data visualization in a similar fashion.

3.4. Standardize Data Processing Integration

Since the YANG Schema is preserved for operational metrics in the Message Broker, a standardization for integration between network data collection and stream processors or time series databases is implied.
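As a small illustration of why preserving the YANG data types matters (objective 3.2), the Python sketch below decodes RFC 7951 JSON leaf values using their YANG type. It assumes the collector already knows each leaf’s type from the schema, and it resolves only a handful of RFC 6991 typedefs; a real implementation would resolve types from the full module set retrieved from the device or a schema registry.

```python
from decimal import Decimal
from typing import Any

# Built-in integer types. RFC 7951 encodes int64/uint64 as JSON strings and
# the smaller ones as JSON numbers, so int() handles both.
_INTEGER_TYPES = {
    "int8", "int16", "int32", "int64",
    "uint8", "uint16", "uint32", "uint64",
}

# Illustrative subset of RFC 6991 typedefs mapped to their built-in base type.
_TYPEDEFS = {
    "yang:counter32": "uint32",
    "yang:counter64": "uint64",
    "yang:gauge32": "uint32",
    "yang:gauge64": "uint64",
    "yang:date-and-time": "string",
}


def decode_leaf(value: Any, yang_type: str) -> Any:
    """Convert an RFC 7951 JSON leaf value into a typed Python value."""
    base = _TYPEDEFS.get(yang_type, yang_type)
    if base in _INTEGER_TYPES:
        return int(value)
    if base == "decimal64":
        # decimal64 is also a JSON string; Decimal keeps the exact fraction digits.
        return Decimal(value)
    # Strings, booleans, enumerations, etc. are already usable as decoded by JSON.
    return value


if __name__ == "__main__":
    print(decode_leaf("1024", "yang:counter64"))  # 1024
```

With the type preserved, a time series database can store `in-octets` as a 64-bit counter rather than as text, which is the difference between a graph and a string column.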

In a nutshell, we need to make YANG easily consumable. It should be like going to a restaurant: I’d like menu number 1 with soup on the side, et voilà.

To make YANG easily consumable, we need machine-readable APIs for four steps:

- Discover (let’s have a look at the menu):
  - YANG Modules Describing Capabilities for Systems and Datastore Update Notifications (RFC 9196, with ietf-system-capabilities and ietf-notification-capabilities)
  - YANG Notification Transport Capabilities (draft-ietf-netconf-yp-transport-capabilities)

- Describe (this is the recipe):

- Subscribe (placing the order):
  - ietf-subscribed-notifications (RFC 8639)

- Deliver (receiving the plate):
  - ietf-yang-push (RFC 8641) and friends

gRPC/gNMI/vendor-specific implementations do have a Capabilities RPC, which provides a basic form of Discover, but it stops there. Describe is absent: there is no standardized way to retrieve the full YANG schema, its semantics, or its revision history from the device. Subscribe and Deliver work, but without the foundation that Discover and Describe provide, what you get is a pipeline that streams data you cannot fully automate around. In other words, it’s still under development, not finished. gRPC/gNMI/openconfig tried to establish a standardization body. However, from vendor to vendor and from operating system version to operating system version, the APIs and models are not the same, as there is no standardized schema compilation across vendors.

Not convinced yet? Look at the different proto files from just a few vendors:

- Cisco NX-OS → https://github.com/cisco-ie/nx-os-grpc-python

- Cisco IOS-XE → https://github.com/cisco-ie/cisco-proto/tree/master/proto/xe

- Cisco IOS-XR → https://github.com/CiscoDevNet/grpc-getting-started and https://github.com/ios-xr/model-driven-telemetry

- Juniper → https://github.com/Juniper/jtimon/blob/master/telemetry/telemetry.proto and https://github.com/Juniper/telemetry

- Huawei → https://github.com/HuaweiDatacomm/proto

- Nokia → https://github.com/nokia/7X50_protobufs

And the fragmentation goes deeper than just vendor-to-vendor. Huawei alone ships three different .proto files just for the transport and subscription plumbing — huawei-grpc-dialout.proto, huawei-grpc-dialin.proto, and huawei-telemetry.proto — before you even add one .proto file per monitored feature.

And for GPB-compact mode, the situation is even worse: you need one compiled .proto file per YANG module. Given that there are thousands of YANG modules across vendors, that means thousands of schema files to compile, version, and maintain in sync with every router software upgrade. Try automating that.

There is one more operational risk that rarely gets the attention it deserves: silent breakage. When a vendor upgrades a router’s software and updates a YANG module — adding a leaf, renaming a container, changing a type — a gNMI collector has no built-in way to know the schema changed. The subscription keeps flowing, the pipeline keeps running, and the data silently becomes wrong. You only notice when someone looks at a dashboard and something doesn’t add up. This is not a theoretical risk; it happens in production today. draft-ietf-netconf-yang-notifications-versioning solves this directly: every notification carries the exact YANG module revision that produced it, so the collector can detect a schema change the moment it happens and react — automatically. That is the difference between a system you can operate at scale and one you are constantly babysitting.
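Here is a minimal Python sketch of the collector-side check this enables, assuming each decoded notification exposes the sender, the YANG module name, and its revision (the field names are hypothetical). On the first sighting it simply records the revision; afterwards, a different revision signals that the schema must be re-fetched and the downstream ingestion re-onboarded before the data is trusted.

```python
from dataclasses import dataclass, field


@dataclass
class RevisionWatcher:
    """Remembers the YANG module revision last seen per (sender, module)."""

    known: dict[tuple[str, str], str] = field(default_factory=dict)

    def changed(self, sender_id: str, module: str, revision: str) -> bool:
        """Record the revision and return True if it differs from the previous one."""
        key = (sender_id, module)
        previous = self.known.get(key)
        self.known[key] = revision
        # The first sighting is onboarding, not a change.
        return previous is not None and previous != revision


if __name__ == "__main__":
    watcher = RevisionWatcher()
    # 2018-02-20 is the ietf-interfaces revision from RFC 8343; 2099-01-01 is hypothetical.
    for rev in ("2018-02-20", "2018-02-20", "2099-01-01"):
        if watcher.changed("router-1", "ietf-interfaces", rev):
            print(f"schema change detected: ietf-interfaces now at {rev}")
```

Keying by sender as well as module reflects that routers are upgraded one at a time, so two revisions of the same module can legitimately coexist in the network.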

We need standardization to reduce maintenance effort and cost, and to improve quality and maintainability. In other words, once YANG is easily consumable and transformable, because we have machine-readable APIs and standardization in Discover, Describe, Subscribe, and Deliver with well-defined schema and semantics, we reduce the cost of operation and the time needed to create next-generation Network Analytics applications such as Network Anomaly Detection. Being able to fully automate and evolve in a standardized fashion is an absolute requirement.

Take IPFIX as an example: you configure flow monitoring on the routers and, after a few seconds, the first flow records arrive at the collector. Why should it be more difficult for YANG-based telemetry?

Granted, openconfig did a great job of delivering tools at the same time as the gRPC/gNMI implementations, and this has been a real advantage. Now we are starting to see such tools developed for IETF YANG-Push, as well as router vendor implementations.

Six years ago I said “the market will decide”. Well, I think the market is deciding — and the direction is clear. Multiple major vendors implementing the full stack at a single IETF hackathon is not early adoption noise anymore. True, the tooling ecosystem around gRPC/gNMI is still more mature today, and openconfig deserves credit for building tools alongside the protocol from day one. We are catching up on that front, as the open-source implementations listed above show. But on the fundamentals — transport flexibility, distributed publishing, schema versioning, automatic onboarding — IETF YANG-Push is the more complete answer. gRPC/gNMI solved the urgent problem first. IETF YANG-Push is solving the complete problem. Fun fact: it’s like Didi that doesn’t drive to Macao, gRPC/gNMI only gets you half of the way there 🙂

If you have been following the most recent YANG-Push to Message Broker presentations at NMOP, you will notice that we are slowly moving up the chain. We are now improving YANG feature coverage in YANG libraries, to ensure that message validation and automated transformation are not only a possibility but a reality, whether the library is written in C, Rust, or Java. Stay tuned for IETF 126.